I Tried to Build a 2D Game Using Only AI, and the Biggest Challenge Wasn’t the Code…

By Lukasz Rynski - Head of Interactive at Koffeecup

I recently set myself a challenge: could I build a 2D game using only AI?

At first, that sounded like the kind of experiment that should be straightforward in 2026. AI can write code, generate art, create models, suggest mechanics, and even help with worldbuilding. On paper, the whole process feels almost automated. In reality, though, I found that AI is much better at some parts of game development than others.

The easiest part, unsurprisingly, was scripting. That is the area I am most comfortable with, so even when AI produced something imperfect, I could quickly step in and fix it.

Wrong logic, bad architecture, awkward naming, broken edge cases: none of that was a major blocker. AI was useful because it accelerated the work, but it was still operating inside a space I understood well enough to control. In that sense, using AI for programming felt less like magic and more like collaborating with a fast but inexperienced assistant. It could get me most of the way there very quickly, and I could take care of the rest.

The real challenge was assets.

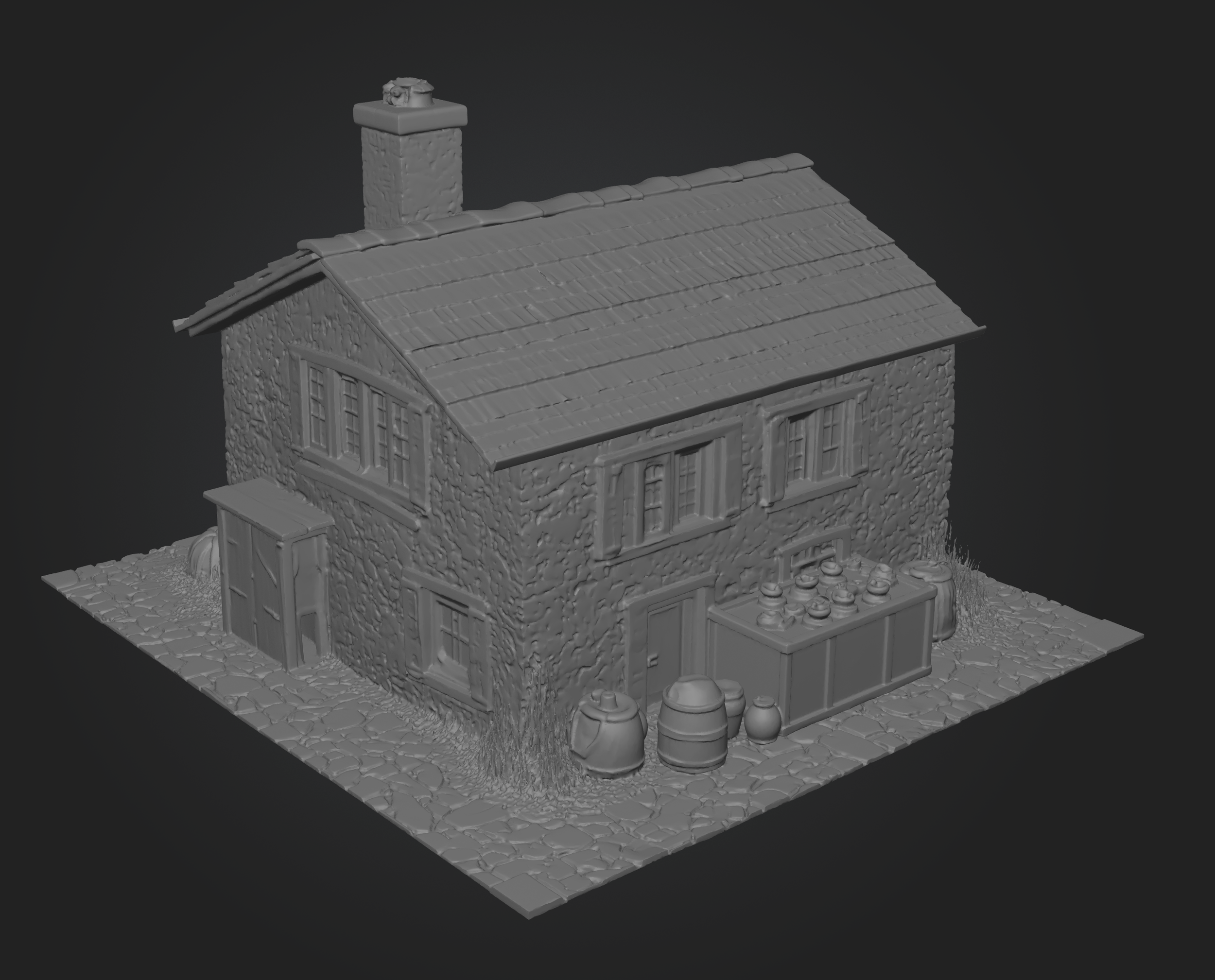

I am not an artist, so visuals were always going to be the hardest part of this experiment. My first instinct was to go fully 3D. If AI could generate meshes, maybe I could simply build the world that way and avoid the whole issue of drawing stylized 2D art. The results were promising at first glance, but the more I worked with them, the more obvious the limitations became. The generated meshes often looked less like intentional game assets and more like rough 3D scans. They were usable in a technical sense, but visually inconsistent and often time-consuming to generate. Even worse, they lacked the clear art direction that makes a game world feel coherent.

That was the moment when I started shifting my thinking. Instead of asking AI to create finished assets from scratch, I began wondering whether it would perform better if I gave it a stronger foundation.

Rather than choosing between 3D and 2D, what if I combined them? What if the 3D models were not the final assets, but only a structural base for generating 2D visuals?

That idea led me toward an isometric approach. It felt like the right compromise: simple enough to be manageable, but visually rich enough to create an appealing environment.

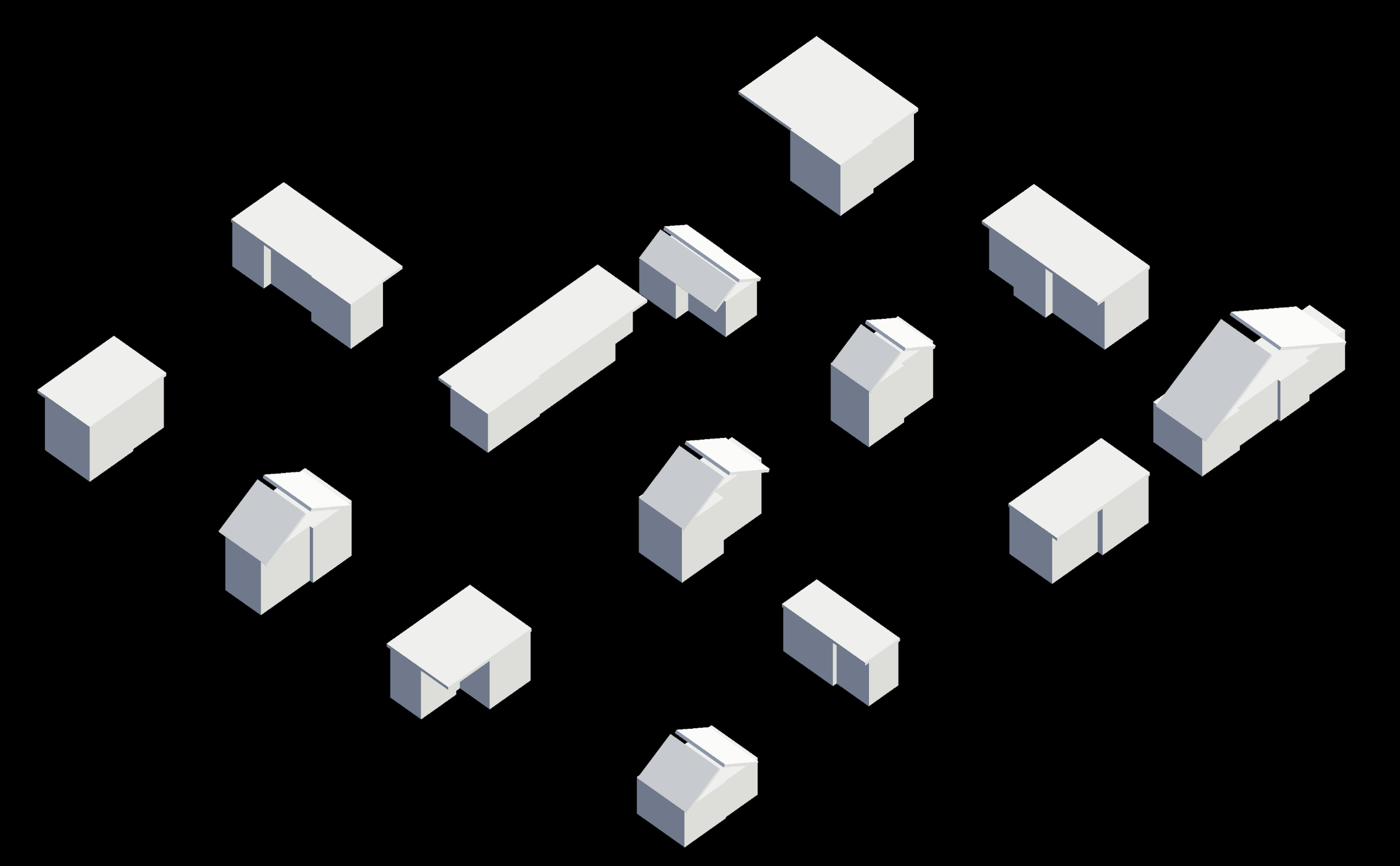

I started by building a small town and asked AI to generate a script that would create houses out of very basic building blocks, no more than three cubes and two types of roofs.

This part actually worked surprisingly well. The generated houses were not beautiful, but they did not need to be. What mattered was that they had consistent dimensions, readable silhouettes, and enough variation to serve as placeholders.
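The generated script itself isn't shown here, but the constraint it worked under (at most three cubes, one of two roof types) is easy to express in code. The following is a minimal Python sketch under illustrative assumptions that do not come from the original script: cubes snap to an integer grid so every house shares the same unit size, extra cubes are either stacked on top of an existing cube or placed beside one at ground level, and the roof types are named `gable` and `flat` purely as placeholders.

```python
import random
from dataclasses import dataclass

# Illustrative assumptions: the article only says "no more than three cubes
# and two types of roofs". Names and placement rules here are hypothetical.
ROOF_TYPES = ("gable", "flat")
MAX_CUBES = 3


@dataclass(frozen=True)
class Cube:
    x: int  # integer grid cell, so all houses share one unit size
    y: int  # stacking level, 0 = ground
    z: int


@dataclass
class House:
    cubes: list
    roof: str


def generate_house(rng: random.Random) -> House:
    """Build a house from one to MAX_CUBES unit cubes plus a single roof type."""
    target = rng.randint(1, MAX_CUBES)
    cubes = [Cube(0, 0, 0)]
    occupied = {cubes[0]}
    while len(cubes) < target:
        base = rng.choice(cubes)
        if rng.random() < 0.5:
            # Stack one level above an existing cube (never floating)
            candidate = Cube(base.x, base.y + 1, base.z)
        else:
            # Extend sideways at ground level next to an existing cube
            dx, dz = rng.choice([(1, 0), (-1, 0), (0, 1), (0, -1)])
            candidate = Cube(base.x + dx, 0, base.z + dz)
        if candidate not in occupied:  # skip cells that are already filled
            cubes.append(candidate)
            occupied.add(candidate)
    return House(cubes=cubes, roof=rng.choice(ROOF_TYPES))


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed: the same town layout on every run
    town = [generate_house(rng) for _ in range(10)]
    for house in town:
        print(len(house.cubes), house.roof)
```

Seeding the generator makes the layout reproducible, which matters once the houses become a fixed base for sprite generation: the placeholders never shift under the art.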

At that stage, I assumed the next part would be easy. I had simplified 3D structures, so all I needed was to generate 2D assets that matched them. In theory, it sounded elegant: take the underlying form, cover it with AI-generated sprites, and let the visual layer transform the rough geometry into something more polished. In practice, it turned out to be much harder than I expected.

Even when AI respected the rough dimensions and position of the 3D object, the results often felt off. The perspective was inconsistent, the proportions were strange, and the final composition simply did not look good. It matched the structure, but it did not feel like a believable game asset.

One interesting advantage of this approach, however, quickly became clear. Because the 3D structure stays the same, changing the visual style becomes extremely fast.

I can take the same set of simple models and generate completely different looks on top of them, from more realistic textures to stylized or even cartoonish variations.

This makes iteration much cheaper. Instead of rebuilding assets from scratch, I can experiment with different visual directions in minutes and compare results directly on the same scene. That flexibility would be very difficult to achieve with traditional handcrafted pipelines.
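One way to picture why this is cheap is as a job matrix: fixed structural renders on one axis, style directions on the other. The sketch below covers only a hypothetical planning step. `StyleJob`, `plan_style_pass`, `group_by_style` and the render paths are illustrative names, and the actual image-generation call is left out because no specific tool is named here.

```python
from collections import defaultdict
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class StyleJob:
    asset_id: str     # which placeholder house this sprite is for
    base_render: str  # hypothetical path to a render of the unchanged 3D model
    style: str        # free-text style direction for the image model


def plan_style_pass(base_renders: dict, styles: list) -> list:
    """Pair every fixed structural render with every style direction.

    Geometry is never rebuilt: only the style string varies, so each new
    look costs one generation per asset and no modelling work.
    """
    return [
        StyleJob(asset_id, path, style)
        for style, (asset_id, path) in product(styles, base_renders.items())
    ]


def group_by_style(jobs: list) -> dict:
    """Group jobs so the whole scene can be re-skinned and compared per style."""
    grouped = defaultdict(list)
    for job in jobs:
        grouped[job.style].append(job)
    return dict(grouped)


if __name__ == "__main__":
    renders = {"house_01": "renders/house_01.png", "house_02": "renders/house_02.png"}
    styles = ["realistic textures", "stylized", "cartoonish"]
    for style, batch in group_by_style(plan_style_pass(renders, styles)).items():
        print(style, [job.asset_id for job in batch])
```

Keeping the geometry as the invariant and the style as the only variable is what lets each variation be judged on the same scene.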

The gap between matching the structure and actually looking right was probably the most useful lesson in the whole process. AI can follow instructions, but visual coherence is not something it automatically understands just because the geometry is correct. There is a big difference between technical alignment and artistic consistency.

So I changed the approach again. Instead of trying to generate everything procedurally and in bulk, I moved toward a more curated workflow. I stopped thinking in terms of generating a system of houses and started focusing on individual assets. If I wanted style consistency, I had to reduce the freedom I was giving the model. I tried reusing a single model, viewed from multiple rotations, as the reference, hoping that would be enough to guide the generation, but it still was not specific enough. The outputs varied too much and lost the visual identity I was trying to establish.

What finally started working was using a small, deliberate set of references. I assembled a group of houses and selected four of them as a test case. That gave the generation process just enough variety to feel flexible, while still keeping the style grounded.

And for the first time, the results felt cohesive. The assets were not only usable, they looked like they belonged together.

Once I had that, the rest of the prototype came together surprisingly fast. I placed the houses into the scene, added colliders, overlaid them with the AI-generated sprites, and paired the environment with a simple character controller and an isometric orthographic camera. Suddenly, the experiment stopped feeling theoretical. It was no longer just a collection of tests; it had become a small, functioning isometric game space.
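No engine is named here, and most engines let you simply set a camera to orthographic and rotate it. For lining sprites up with the 3D blocks underneath, though, it can help to see the math such a camera performs. This is a minimal sketch assuming a y-up world, a 45° yaw around the vertical axis, and a downward pitch: 30° gives the 2:1 tile ratio common in pixel-art "isometric" games, while about 35.26° (arctan of 1/√2) gives true isometric. The function name `iso_project` is hypothetical.

```python
import math


def iso_project(x, y, z, yaw_deg=45.0, pitch_deg=30.0):
    """Orthographic projection of a world point (y-up) onto the screen plane.

    Returns (screen_x, screen_y, depth). Orthographic means no division by
    depth: parallel edges stay parallel and size does not change with distance.
    Larger depth means farther from the camera, which can be used to draw
    overlapping sprites back to front.
    """
    yaw = math.radians(yaw_deg)
    pitch = math.radians(pitch_deg)
    # Rotate the scene around the vertical axis
    xr = x * math.cos(yaw) - z * math.sin(yaw)
    zr = x * math.sin(yaw) + z * math.cos(yaw)
    # Camera right = (1, 0, 0), up = (0, cos p, sin p), forward = (0, -sin p, cos p)
    screen_x = xr
    screen_y = y * math.cos(pitch) + zr * math.sin(pitch)
    depth = -y * math.sin(pitch) + zr * math.cos(pitch)
    return screen_x, screen_y, depth


if __name__ == "__main__":
    sx, sy, _ = iso_project(1.0, 0.0, 0.0)
    print(sy / sx)  # roughly 0.5: one grid step is twice as wide as it is tall
    true_iso = math.degrees(math.atan(1 / math.sqrt(2)))
    print(iso_project(1.0, 0.0, 0.0, pitch_deg=true_iso))
```

With true isometric pitch, unit steps along all three world axes project to the same screen length, which is why blocks built on a shared grid read as consistently sized.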

CONCLUSION

What I found most interesting about this whole process is that AI did not replace the need for design decisions. If anything, it made those decisions more important. The breakthrough did not come from asking AI to do more. It came from giving it better constraints. The more structure I provided, the more useful the output became.

In that sense, the experiment was not really about whether AI can make a game on its own. It was about whether I could design a workflow where AI could succeed.

And that, I think, is the more honest way to look at AI in game development right now. The value is not in full automation. It is in building pipelines that balance procedural logic, human direction, and AI generation in a way that plays to the strengths of each. Code was easy because I could correct it. Art was hard because quality depends so heavily on consistency, taste, and controlled variation. What worked best was not pure generation but a hybrid method: simple 3D forms for structure, AI-generated 2D assets for presentation, and manual judgment to connect the two.

So, can you build a 2D game using only AI?

Technically, yes. But the more interesting answer is that success does not come from letting AI handle everything. It comes from understanding where AI is strong, where it is weak, and how to shape the process around those limits.

That is what made this experiment worthwhile for me. I did not just end up with a small isometric environment. I came away with a much better understanding of how AI can actually fit into a real production pipeline, not as a replacement for craft, but as a tool that becomes powerful when given the right structure.
